Is Your Winning Variation Actually Leading to More Cancellations? How to Use Data to Find Out

By Zach Bulygo

Imagine this: you just found a huge win on an A/B test. Your variation doubled signups over the original. So you launch your variant to 100% of visitors. Pop the cork! Here come the signups.

Fast forward six months, and you have a surge in cancellations. Your customer support team can’t figure it out, and there have been no major changes in your product. You are left puzzled and frustrated.

Then you realize something. For the past six months, people have been signing up through the variant. Could this be the cause of the cancellations? If you use Kissmetrics, you can get the answer.

Identifying Whether a Variant Led to More Cancellations

Using Kissmetrics, you can find out if an A/B test that initially delivered more signups ultimately led to more cancellations. It doesn’t matter if the test was six months ago. You can still go back and see how the test performed and whether the variation brought more cancellations. Here’s how we do it.

The Funnel Report

A funnel report is used to track how many people move from one step of your website to the next. Marketers traditionally use a funnel report to see where visitors are dropping off in their path to purchase.

But we can use the Kissmetrics Funnel Report for other purposes, too. One of them is to see which test variation led to more cancellations. To do this, we’ll create a funnel for just two steps – signed up and cancellation. When you use Kissmetrics, you may name your events a little differently, but if you’re a SaaS company, you’ll need to know when people sign up and when they cancel.

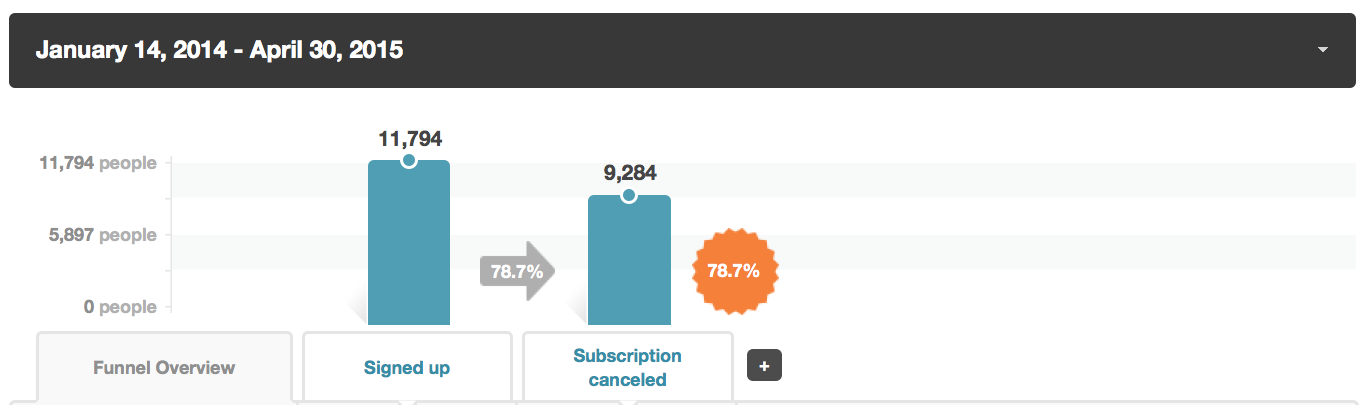

Here’s how our funnel looks:

During our selected time range, we had 11,794 signups. Of those 11,794, nearly 9,300 canceled. To see how each variant performed, we’ll have to segment our traffic. “Segment” is a fancy, analytical way of saying “group.” So if you came to our site and saw the variant, you’d be put in the variant group/segment.
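To make the two-step funnel concrete, here is a minimal Python sketch of the same idea. It is not how Kissmetrics computes the report; the event log, the person IDs, and the dates are all made up for illustration, and the event names simply mirror the "signed up" and "Subscription canceled" steps used in this post.

```python
from datetime import date

# Hypothetical event log: (person_id, event_name, event_date).
# Event names are illustrative; yours may be named differently.
events = [
    ("p1", "Signed Up", date(2015, 1, 5)),
    ("p1", "Subscription canceled", date(2015, 3, 2)),
    ("p2", "Signed Up", date(2015, 1, 9)),
    ("p3", "Subscription canceled", date(2015, 2, 1)),  # no signup in range
    ("p4", "Signed Up", date(2015, 2, 11)),
    ("p4", "Subscription canceled", date(2015, 2, 20)),
]


def two_step_funnel(events, first_step, second_step):
    """Return (people who did first_step, those who then did second_step)."""
    # Earliest date each person performed the first step.
    first_seen = {}
    for person, name, when in events:
        if name == first_step:
            first_seen[person] = min(when, first_seen.get(person, when))

    # People who performed the second step on or after their first step.
    converted = set()
    for person, name, when in events:
        if name == second_step and person in first_seen:
            if when >= first_seen[person]:
                converted.add(person)
    return set(first_seen), converted


signed_up, canceled = two_step_funnel(
    events, "Signed Up", "Subscription canceled"
)
rate = len(canceled) / len(signed_up)
print(f"{len(signed_up)} signed up, {len(canceled)} canceled ({rate:.0%})")
```

The funnel counts people, not events, so someone who cancels without a signup in the time range (p3 here) never enters the funnel.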

Here’s the funnel, segmented by the A/B test:

We can ignore “None.” These are people who were not in the A/B Test. They didn’t see the variant or the original.

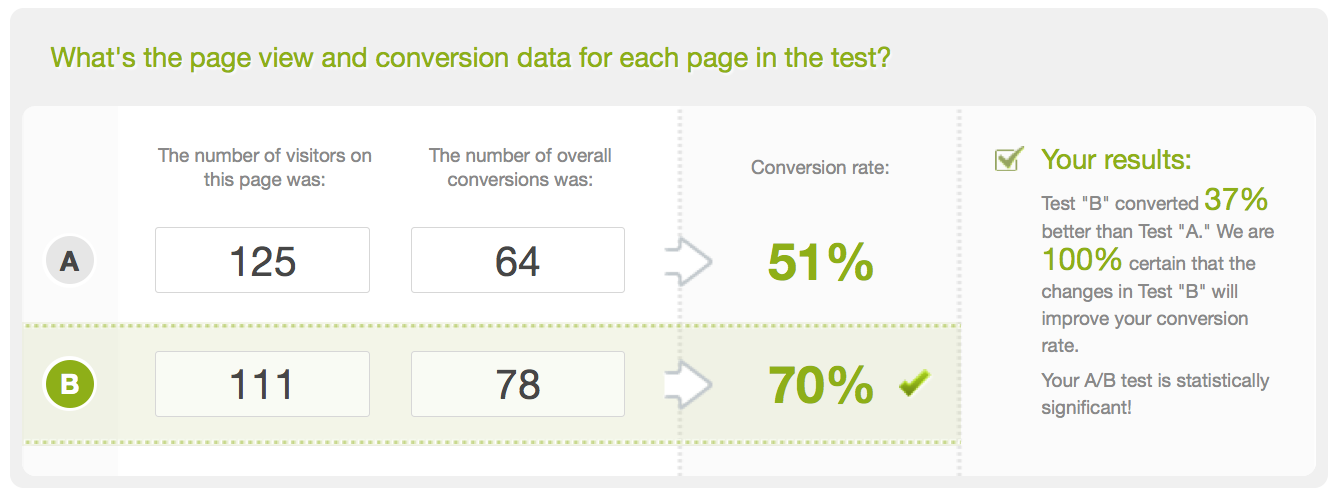

Underneath “None” are our A/B test pages. We have our variant and the original. The variant page generated 125 signups, while the original delivered 111 signups. Also, the variant delivered a much smaller percentage of cancellations.
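Segmenting the funnel amounts to grouping people by the test page they saw and counting signups and cancellations per group. The sketch below shows that grouping in Python. The records and field layout are hypothetical, not an export format from Kissmetrics, and the counts are deliberately tiny.

```python
from collections import defaultdict

# Hypothetical records: (person_id, ab_test_group, signed_up, canceled).
# ab_test_group is None for people who never entered the test.
people = [
    ("p1", "variant", True, False),
    ("p2", "variant", True, True),
    ("p3", "original", True, True),
    ("p4", "original", True, True),
    ("p5", None, True, False),
    ("p6", "variant", True, False),
]


def cancellations_by_segment(people):
    """Count signups and cancellations for each A/B test segment."""
    totals = defaultdict(lambda: {"signups": 0, "cancellations": 0})
    for _person_id, group, signed_up, canceled in people:
        if not signed_up:
            continue  # only people who entered the funnel are counted
        segment = totals[group or "None"]
        segment["signups"] += 1
        if canceled:
            segment["cancellations"] += 1
    return dict(totals)


segments = cancellations_by_segment(people)
for name, counts in segments.items():
    if name == "None":
        continue  # people outside the test are ignored, as in the report
    share = counts["cancellations"] / counts["signups"]
    print(f"{name}: {counts['signups']} signups, {share:.0%} canceled")
```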

Before we can say the variant is a winner, we’ll need to make sure this data is statistically significant. We can use the A/B test significance calculator to get this data. Here’s how it looks:

On the right, we see that this data is statistically significant. We can move forward with confidence that the variant page is the real winner: customers who saw this page canceled at a lower rate than those who saw the original.
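If you want to sanity-check a result like this yourself, one common approach is a two-proportion z-test on the cancellation rates. The sketch below is an assumption about method: the post does not say which test the significance calculator runs. The signup counts (125 and 111) come from the funnel above, but the cancellation counts are invented placeholders, since the post does not state them.

```python
import math


def two_proportion_z_test(x1, n1, x2, n2):
    """Two-sided z-test for the difference between two proportions.

    x1, x2: number of cancellations in each group
    n1, n2: number of signups in each group
    Returns (z, p_value).
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Signup counts are from the article; cancellation counts are hypothetical.
variant_signups, variant_cancellations = 125, 40
original_signups, original_cancellations = 111, 60

z, p_value = two_proportion_z_test(
    variant_cancellations, variant_signups,
    original_cancellations, original_signups,
)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("significant at 5%" if p_value < 0.05 else "not significant at 5%")
```

A negative z here means the variant's cancellation rate is lower than the original's. With samples this small, treat the normal approximation as a rough guide.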

A Word of Caution

While this data is valuable, it’s important to be careful with the conclusions you reach.

One page was followed by more cancellations than the other, and the difference is statistically significant. But can we be 100% no-doubt-about-it absolutely sure that the page is the reason more people canceled? No, we cannot. Correlation is not causation. It could just be happenstance that those shown one page canceled at a higher rate than those shown the other, in which case the page should not be blamed.

This also doesn’t mean the data is worthless. Perhaps the page with more cancellations made a promise that the product couldn’t live up to. Or maybe it attracted the wrong audience. To find this out, we can collect data from our canceled customers and see if we find trends. If not, it may just be coincidence.

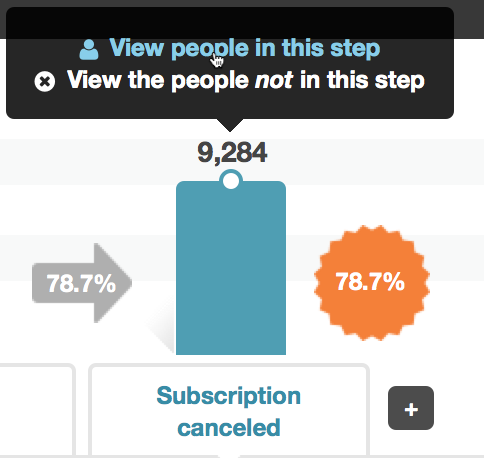

We can find the canceled customers in Kissmetrics. Here’s how:

We’ll click to view the people in the “Subscription canceled” step and view the people in the variant segment. From there, we’ll get our list of people, and we can contact them to learn why they canceled.
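Outside the report, the same drill-down is just a filter: keep the people in one segment who reached the canceled step. Here is a rough Python sketch. The records, field names, and email addresses are hypothetical and do not reflect an actual Kissmetrics export.

```python
# Hypothetical per-person records; fields and emails are illustrative only.
people = [
    {"id": "p1", "email": "ana@example.com", "ab_group": "variant", "canceled": True},
    {"id": "p2", "email": "ben@example.com", "ab_group": "variant", "canceled": False},
    {"id": "p3", "email": "cy@example.com", "ab_group": "original", "canceled": True},
    {"id": "p4", "email": "di@example.com", "ab_group": "variant", "canceled": True},
]


def canceled_in_segment(people, segment):
    """Return the people in the given A/B segment who canceled."""
    return [p for p in people if p["ab_group"] == segment and p["canceled"]]


to_contact = canceled_in_segment(people, "variant")
for person in to_contact:
    print(person["id"], person["email"])
```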

Track Tests with the A/B Test Report

Kissmetrics has a report that tracks your A/B tests. It’s called the A/B Test Report, and it has some unique features.

This report does not create tests for you. You can still use Optimizely, VWO, Unbounce, etc. for that. It does track the test, report the results, and import the data into your Kissmetrics account. There are a few additional benefits of using this report:

- The statistical significance calculator is built in. You’ll see the amount of data that came in, and the Report will draw a conclusion for you (e.g., variant beat original). Its calculator checks whether the results are statistically significant, which can reduce the odds of false positives.

- You can see how an A/B Test impacts any part of your funnel. Want to see how a homepage headline test impacts signups? No problem, just set “signups” as your conversion event. You aren’t limited to testing only to the next conversion step.

Here’s how the report looks:

You’ll get multiple metrics for each variant, and even be able to see every person in each variant.

Click here to watch a video demo of the A/B Test Report.

Get the Whole Picture with Kissmetrics

It would be impossible to get this kind of data if you weren’t using a tool like Kissmetrics.

A benefit to using a people-tracking platform like Kissmetrics is that each visitor to your site is recorded as what they are – a person, not a session. This makes your data more accurate, because one person who visits several times is not counted as several visitors.

If you’re running an A/B test, each person gets tagged with the pages they saw, and you’ll be able to go back and see which pages/steps they went through, as we just did. With Kissmetrics, you can see the entire customer journey, from when the prospect first visited (and which variant they saw) to the customer’s most recent action. As long as you have the tracking in place, you can get the important data you need.
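The difference between counting sessions and counting people is easy to see in a small example. This Python sketch uses a made-up session log and assumes each session has already been tied to a person ID; how that identification happens is outside the scope of the sketch.

```python
# Hypothetical session log: (session_id, person_id, test_page_seen).
sessions = [
    ("s1", "p1", "variant"),
    ("s2", "p1", "variant"),   # same person returning later
    ("s3", "p2", "original"),
    ("s4", "p2", "original"),
    ("s5", "p3", "variant"),
]


def pages_by_person(sessions):
    """Tag each person with the set of test pages they saw."""
    tagged = {}
    for _session_id, person, page in sessions:
        tagged.setdefault(person, set()).add(page)
    return tagged


tagged = pages_by_person(sessions)
print(len(sessions), "sessions,", len(tagged), "people")
for person, pages in sorted(tagged.items()):
    print(person, sorted(pages))
```

Counted by session, the variant appears to have three visitors; counted by person, it has two.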

To see how Kissmetrics can benefit your marketing, sign up for a personal demo today. To get right into it, you can sign up for a 14-day Kissmetrics trial.

About the Author: Zach Bulygo (Twitter) is a Content Writer for Kissmetrics.
